AI-generated code: fast, simple, unsafe?

The promise of AI is simple: describe what you want and the code appears. But while efficiency reaches record heights, a question arises: are we building an innovative app or a digital house of cards? WhiteHats decided to put AI to the test and discovered that the line between "working code" and "secure code" is razor-thin.

The setup

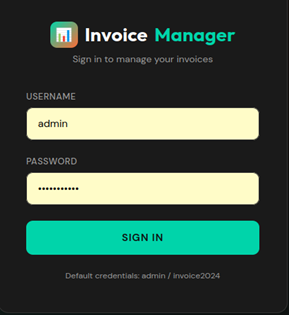

For this experiment, we gave the MiniMax 2.5 model (free tier) full freedom in building an invoicing platform. To establish a baseline, we deliberately adopted a naïve mindset, focusing solely on functionality. Using the prompt "I don't know anything about coding, so please handle everything", we asked for a professional Node.js application that could create invoices and send them to clients.

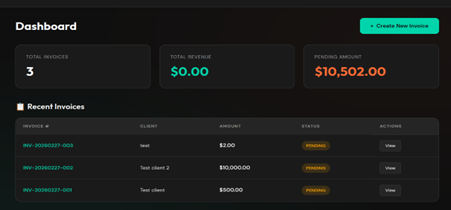

Within minutes, we received exactly what we asked for: a minimalistic Node.js application that could create invoices and send them to clients.

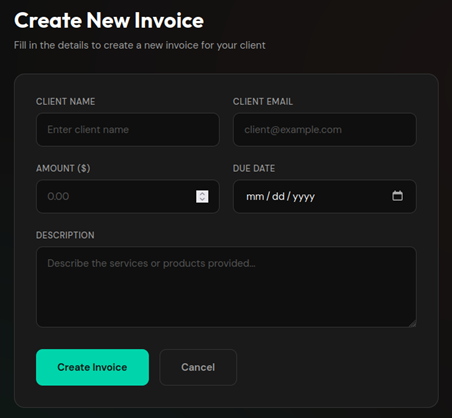

To make the application slightly more complex, we chose to add users and a permissions system. We asked the AI to make a few changes:

- Remove the default login credentials from the login page;

- Add a page where users can change their login details;

- Add a registration page for employees;

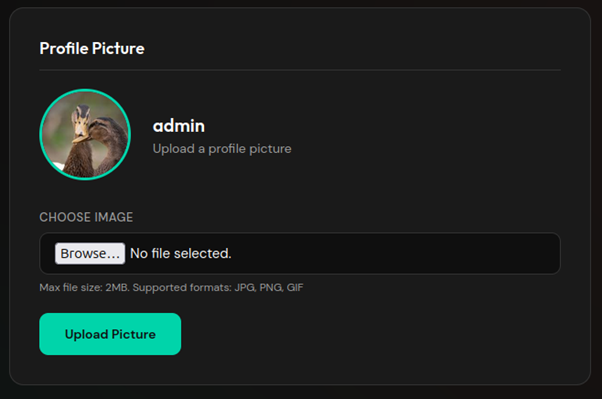

- Add the ability to upload a profile photo;

- Add a search bar for invoices;

- Ensure employees can only view their own invoices;

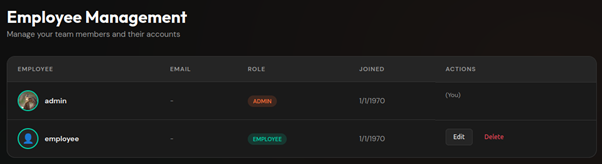

- Add an administrator page displaying all users;

- Allow bold and italic text in invoice descriptions.

After some waiting, the application was complete. All requested functionality was included and worked.

It works... now what?

As we at WhiteHats know all too well, an application that works is not necessarily a secure one. We reviewed the AI-built system and tested it for various common vulnerabilities, such as brute-force attacks on the login and bypassing access controls.

During this review, the following vulnerabilities surfaced:

- No brute force protection: No mechanism exists to slow down or block repeated login attempts.

- XSS vulnerability: To support bold/italic formatting, the AI disabled the framework’s default security, allowing arbitrary HTML and JavaScript execution.

- Insecure invoice URLs: Invoices are retrieved using sequential IDs (e.g. /invoice/1). With no extra access control, users can view others’ invoices by incrementing the ID.

- Unsafe file uploads: Profile photo uploads do not validate file type or extension, allowing arbitrary file uploads to the server.

- Missing CSRF protection: No safeguards against Cross-Site Request Forgery.

- Outdated software: The AI used npm 10.8.2 and Node 20.20.0, both outdated and containing known vulnerabilities.

- No security headers: The application sets no HTTP security headers.
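The invoice URL issue in the list above comes down to a missing ownership check. As a minimal sketch (not the code the AI generated), assuming hypothetical `user` objects with `id` and `role` fields, an `'admin'` role name, and invoices carrying an `ownerId`, a lookup could refuse any invoice that does not belong to the requester:

```javascript
// Hypothetical in-memory data; a real application would query its database.
const invoices = new Map([
  [1, { id: 1, ownerId: 'alice', total: 120 }],
  [2, { id: 2, ownerId: 'bob', total: 75 }],
]);

// Returns the invoice only if the requester owns it or is an administrator.
// Returning null for both "missing" and "forbidden" avoids revealing
// which IDs exist.
function getInvoiceFor(user, invoiceId) {
  const invoice = invoices.get(invoiceId);
  if (!invoice) return null;
  const allowed = user.role === 'admin' || invoice.ownerId === user.id;
  return allowed ? invoice : null;
}

const alice = { id: 'alice', role: 'employee' };
console.log(getInvoiceFor(alice, 1)); // alice's own invoice
console.log(getInvoiceFor(alice, 2)); // null, because invoice 2 belongs to bob
```

With a check like this in place, incrementing the ID in the URL no longer exposes other users' invoices, regardless of how guessable the ID is.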

Did the AI not think of anything? It did, in fact. Even though we never explicitly asked for it, the AI implemented several security measures:

- Role-Based Access Control: Middleware was added to check which URLs are accessible to administrators, employees, or non-logged-in visitors.

- Secure password storage: Passwords are not stored in plain text but are encrypted in the database.

- SQL injection prevention: The AI consistently used prepared statements, which prevents SQL injection through those queries.

- Session security: Session cookies are set with the HttpOnly flag by default, making it more difficult for malicious actors to hijack sessions via scripts.

Experiment 1

In the initial prompt, we avoided any mention of security. We wanted to see whether the AI could secure the app when asked in broad terms, without naming specific vulnerabilities.

With our naïve stance maintained, we asked:

"A friend of mine checked the code and said it is very insecure. Can you fix all security vulnerabilities and make it extremely safe?"

After this, we retested the functionality and previous vulnerabilities. The AI made the following changes:

- Brute force protection: Now blocks IP addresses after 10 failed login attempts. An improvement, though easily bypassed with VPNs or proxies.

- XSS: The framework’s security was restored, breaking the bold/italic formatting. Only after pointing this out did the AI switch to output sanitisation.

- Invoice URLs: Now secured with a combination of the original ID and a GUID, making enumeration harder.

- File uploads: A MIME type check was added; unknown extensions are replaced with .png.

- CSRF: Initially added, but later removed entirely because the app failed to start. The AI prioritised a working app over security.

- Outdated software: No updates were performed for Node or npm. In fact, the AI added a new package that contains a publicly known vulnerability.

- Security headers: Three basic security headers were added to improve browser security.
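To illustrate why blocking by IP address alone is easy to sidestep, here is a minimal sketch of such a counter. The names `recordFailure` and `isBlocked` and the in-memory `Map` are illustrative assumptions, not the AI's actual code; only the threshold of 10 attempts comes from the experiment.

```javascript
const MAX_FAILURES = 10; // threshold observed in the experiment

// Failed-attempt counters keyed only by client IP (illustrative, in memory).
const failuresByIp = new Map();

function recordFailure(ip) {
  failuresByIp.set(ip, (failuresByIp.get(ip) || 0) + 1);
}

function isBlocked(ip) {
  return (failuresByIp.get(ip) || 0) >= MAX_FAILURES;
}

// Ten wrong passwords from one address trigger the block...
for (let i = 0; i < 10; i++) recordFailure('203.0.113.5');
console.log(isBlocked('203.0.113.5')); // true

// ...but the same attacker arriving from a new address starts from zero.
console.log(isBlocked('198.51.100.7')); // false
```

Because the counter knows nothing about which account is being targeted, rotating through proxies gives an attacker ten fresh guesses per address.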

Experiment 2

In the following experiment, we once again used the base version of the application as the starting point, the version without any security updates. This time, however, we set aside our naïve approach. Instead of a vague request for help, we provided the AI with specific instructions about the existing vulnerabilities.

Here, we deliberately left open how the vulnerability should be resolved; we merely pointed out the problem. For the missing brute-force protection, for example, we used the prompt:

“The login is vulnerable to brute force, fix that.”

We then verified whether the AI, when explicitly directed to a specific problem, would arrive at the correct technical solutions. The results of this approach were as follows:

- Brute force protection: The application now blocks attempts based on a combination of username and IP address. While this raises the barrier, bypassing the protection via a VPN or proxy remains possible.

- XSS: The AI wrote its own sanitizeHTML function. However, this function is very basic, making the protection relatively easy to bypass with more advanced payloads.

- Invoice URLs: A solution similar to the previous experiment was chosen. Instead of a GUID, the AI now adds a random 64-character string to the URL, which effectively prevents enumeration.

- File uploads: Security has been significantly tightened. The AI now checks the MIME type, file extension, and magic bytes of the file. If any of these values do not match, the request is rejected.

- CSRF: The AI built its own CSRF protection using middleware that is applied to all POST requests.

- Outdated software: All software in use has been updated to the latest versions, including the Node environment, npm, and all dependencies.

- Security headers: The helmet package was installed to set the headers. This caused a conflict: the Content Security Policy (CSP) blocked styling in the invoices. After we asked the AI to fix this, the values 'unsafe-inline' and 'unsafe-eval' were added, which largely negates the effectiveness of the CSP header.
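The three-way upload check described in the list above (MIME type, extension, magic bytes) can be sketched as follows. This is an illustration under assumptions, not the AI's code: the function name `isAcceptableImage`, the PNG/JPEG allow-list, and the in-memory `Buffer` input are all hypothetical.

```javascript
const path = require('node:path');

// Assumed allow-list: each MIME type maps to its accepted extensions and
// the well-known signature ("magic bytes") at the start of such files.
const ALLOWED = new Map([
  ['image/png', {
    extensions: ['.png'],
    signature: Buffer.from([0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a]),
  }],
  ['image/jpeg', {
    extensions: ['.jpg', '.jpeg'],
    signature: Buffer.from([0xff, 0xd8, 0xff]),
  }],
]);

// Accepts an upload only if the declared MIME type, the file extension and
// the leading bytes of the content all agree with each other.
function isAcceptableImage(filename, mimeType, content) {
  const rule = ALLOWED.get(mimeType);
  if (!rule) return false; // MIME type not on the allow-list
  const ext = path.extname(filename).toLowerCase();
  if (!rule.extensions.includes(ext)) return false;
  return content.subarray(0, rule.signature.length).equals(rule.signature);
}

const png = Buffer.from([0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a, 0x00]);
const script = Buffer.from('<?php echo 1; ?>');
console.log(isAcceptableImage('photo.png', 'image/png', png));    // true
console.log(isAcceptableImage('photo.png', 'image/png', script)); // false
console.log(isAcceptableImage('shell.php', 'image/png', png));    // false
```

The point of combining the three checks is that the MIME type and the extension are both supplied by the client, while the leading bytes come from the file content itself.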

Experiment 3

So far, we have given the AI the autonomy to come up with its own solutions each time. In the final experiment, we examined how the AI implements solutions when the solution itself is also provided.

- Brute force protection: At our request to block users after ten failed login attempts within a fifteen-minute window, the AI implemented exactly this logic.

- XSS: We instructed the AI to use DOMPurify and to strip all HTML tags except <i>, <b>, <ul>, and <ol>. Here too, the instructions were followed precisely.

- Invoice URLs: The AI was instructed to add a cryptographically secure 32-byte token to invoice URLs. Requests where this token does not match the database must be rejected. The AI implemented this flawlessly.

- File uploads: We set strict requirements for file uploads: only .png, .jpeg, and .jpg are allowed. In addition, at our request, the AI added a check on the MIME type. If there is a mismatch between the file extension and the MIME type, the request is rejected and logged immediately.

- CSRF: Even without the help of external libraries, the AI managed to manually generate and validate CSRF tokens for all non-GET requests.

- Outdated software: Updating Node.js, npm, and various project dependencies to the latest versions proceeded completely smoothly.

- Security headers: This proved to be the only stumbling block. Due to a rich-text editor with inline JavaScript, the AI initially struggled with the Content Security Policy (CSP). After 20 minutes of unsuccessful attempts, we instructed it to temporarily use the insecure 'unsafe-inline' value, which it did immediately. To subsequently restore the security level, we asked the AI to replace 'unsafe-inline' with a nonce. This more complex change was then implemented quickly and correctly.
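Two of the fixes in this experiment, the 32-byte invoice token and the CSP nonce, rely on cryptographically secure random values. The sketch below uses Node's built-in `crypto` module to show the idea. The function names, the hex and base64 encodings, the 16-byte nonce length, and the exact policy string are assumptions for illustration, not what the AI produced.

```javascript
const crypto = require('node:crypto');

// 32 random bytes from the OS CSPRNG, hex-encoded for use in a URL (64 chars).
function newInvoiceToken() {
  return crypto.randomBytes(32).toString('hex');
}

// Constant-time comparison of the token from the URL with the stored one.
function tokenMatches(supplied, stored) {
  const a = Buffer.from(String(supplied), 'utf8');
  const b = Buffer.from(String(stored), 'utf8');
  // timingSafeEqual throws on unequal lengths, so check the length first.
  return a.length === b.length && crypto.timingSafeEqual(a, b);
}

// A fresh nonce should be generated for every response.
function newCspNonce() {
  return crypto.randomBytes(16).toString('base64');
}

// Builds a Content-Security-Policy value that allows only scripts carrying
// the matching nonce attribute, instead of falling back to 'unsafe-inline'.
function cspHeaderFor(nonce) {
  return `default-src 'self'; script-src 'self' 'nonce-${nonce}'`;
}

const stored = newInvoiceToken();
console.log(tokenMatches(stored, stored));  // true
console.log(tokenMatches('guess', stored)); // false
console.log(cspHeaderFor(newCspNonce()));
```

In a page served with this header, an inline `<script>` tag runs only if its `nonce` attribute carries the same value that was sent in the header for that response.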

AI: yay or nay?

Our research with MiniMax 2.5 exposes a clear pattern in AI-generated code. The key insight?

AI naturally prioritises functionality over security.

Functionality over security

Without specific guidance, the AI delivers a product that 'works'. The buttons do what they are supposed to do and the interface looks professional. However, under the hood, security is secondary when using a standard prompt. The AI will even disable active security mechanisms (as seen in our XSS and CSP tests) in order to keep the requested functionality running. Security is not an implicit requirement for an AI, but rather an optional feature.

The paradox of expertise

The results of our three experiments reveal an interesting paradox.

- Asking for "security" yields inconsistent results: When a non-expert asks the AI to make an application "extremely secure," the AI takes a scattershot approach. Sometimes it hits the mark with a strong solution, but just as often it settles for superficial patches. Without substantive guardrails, security becomes a matter of chance rather than a guarantee.

- The AI needs an expert: Only when we dictated precisely which techniques should be used (such as DOMPurify, nonces, or specific cryptographic tokens) did we obtain a result that would hold up in a professional security audit.

The temptation of AI is great. With the push of a button, a functional application is up and running. But as our research shows, 'working code' is not synonymous with 'secure code'. AI is an excellent assistant for making rapid progress, but a mediocre architect when it comes to your digital security. The critical difference lies in control: without an expert who can pinpoint the weak spots, security remains a high-stakes gamble.

Therefore, use AI primarily to increase your efficiency, but never leave the foundation of your application to an algorithm that prioritises functionality over security. After all, a fast delivery is worth little if the back door is unintentionally left wide open.